Digital age platforms are providing researchers the ability to outsource portions of their work – not just to increasingly intelligent machines, but also to a relatively low-cost online labor force comprised of humans. These so-called “online outsourcing” services help employers connect with a global pool of free-agent workers who are willing to complete a variety of specialized or repetitive tasks.

Because it provides access to large numbers of workers at relatively low cost, online outsourcing holds a particular appeal for academics and nonprofit research organizations – many of whom have limited resources compared with corporate America. For instance, Pew Research Center has experimented with using these services to perform tasks such as classifying documents and collecting website URLs. And a Google search of scholarly academic literature shows that more than 800 studies – ranging from medical research to social science – were published using data from one such platform, Amazon’s Mechanical Turk, in 2015 alone.1

The rise of these platforms has also generated considerable commentary about the so-called “gig economy” and the possible impact it will have on traditional notions about the nature of work, the structure of compensation and the “social contract” between firms and workers. Pew Research Center recently explored some of the policy and employment implications of these new platforms in a national survey of Americans.

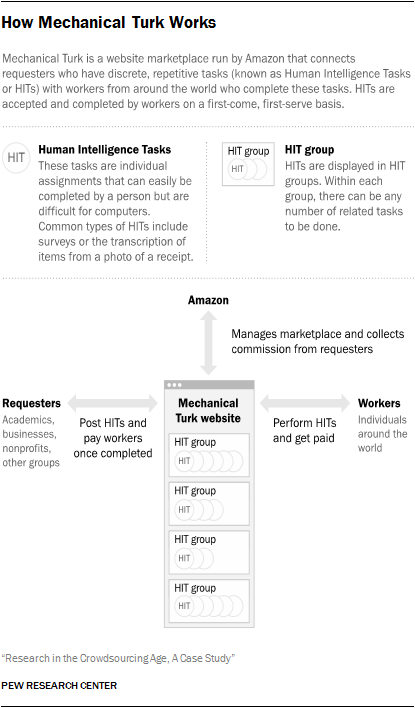

The marketplace of Mechanical Turk has a language all of its own. Most of the following definitions were created by the designers of the site, while a few terms (such as Turkers and task) have become commonly used phrases adopted by some in the Mechanical Turk community.

HIT: The term HIT (or HITs) stands for Human Intelligence Tasks. Each HIT is an individual assignment that a worker can undertake.

HIT group: A HIT group is a collection of tasks (or HITs). The main pages of Mechanical Turk display HIT groups – rather than individual HITs – so that multiple people can perform the same task. For example, a company may want 10 different people to complete the same survey, so it would post a single HIT group that contained 10 HITs. Once all of the HITs within a HIT group have been taken by workers, the HIT group disappears from the site.

Most of this report will refer to HIT groups (as opposed to singular HITs) since they are the way that requesters post assignments and the clearest way to measure activity on the site.

Task: A commonly used synonym for HIT.

Worker: An individual who works on HITs in order to earn money. Workers must register with Amazon and Mechanical Turk. Workers can collect qualifications in order to gain access to a wider range of HIT groups.

Requester: A person, business or organization that posts HITs on Mechanical Turk. Requesters post assignments and determine how much reward to give for the completion of each task. Requesters also set the requirements a worker must meet to attempt a task and determine whether a task was completed in a satisfactory manner. Requesters pay a commission to Mechanical Turk for use of the site. This commission is a percentage of the reward they pay to workers.

Active requester: A term used by Dr. Panagiotis G. Ipeirotis of the New York University Stern School of Business to measure the number of requesters on the site who repeatedly post tasks over time. An active requester is a requester who has previously posted a HIT and posts another HIT later on. For example, a company would be considered an active requester on May 13 if it had posted a HIT prior to that date and posted another on or after May 13.
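The definition above can be expressed as a simple check over a requester's posting dates. The following is a minimal sketch, not the method used by mturk-tracker: the function name `is_active_requester`, the list-of-dates input and the year in the sample dates are all assumptions made for illustration.

```python
from datetime import date


def is_active_requester(post_dates, day):
    """Return True if the requester posted a HIT before `day`
    and posted another HIT on or after `day`."""
    posted_before = any(d < day for d in post_dates)
    posted_on_or_after = any(d >= day for d in post_dates)
    return posted_before and posted_on_or_after


# Hypothetical posting history; the year is chosen only for illustration.
posts = [date(2015, 5, 1), date(2015, 5, 20)]

print(is_active_requester(posts, date(2015, 5, 13)))  # posted before and after
print(is_active_requester(posts, date(2015, 5, 21)))  # no later posting
```

Under this reading, a requester who has posted only once can never count as active, because a single posting cannot fall both before the date in question and on or after it.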

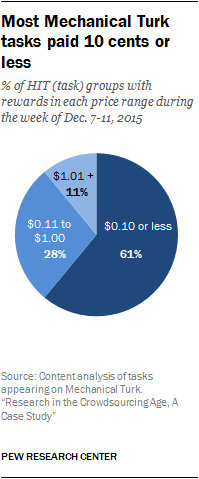

Reward: The amount of money paid by the requester to the worker for the completion of a HIT. These range from “free” to $60 or more, although most rewards are less than 10 cents.

Qualification: A qualification is a property of a worker that represents that worker’s skill, ability or reputation. Requesters may create qualifications that workers must achieve to work on specific HITs. Workers earn these qualifications by fulfilling requirements, which usually involve the completion of a specific test. There are a variety of qualifications. Some requesters will require that workers have demonstrated a successful history on the site. Some qualifications require workers to live in a particular country. And there are specific qualifications that workers can earn such as the “Adult Content Qualification” or “Confidentiality Qualification.”

MTurk: A commonly used abbreviation for the Mechanical Turk site.

Turkers: A commonly used term for the workforce on Mechanical Turk.

Proponents say this technology-driven innovation can offer employers – whether companies or academics – the ability to control costs by relying on a global workforce that is available 24 hours a day to perform relatively inexpensive tasks. They also argue that these arrangements offer workers the flexibility to work when and where they want to. On the other hand, some critics worry this type of arrangement does not give employees the same type of protections offered in more traditional work environments – while others have raised concerns about the quality and consistency of data collected in this manner.

A recent report from the World Bank found that the online outsourcing industry generated roughly $2 billion in 2013 and involved 48 million registered workers (though only 10% of them were considered “active”). By 2020, the report predicted, the industry will generate between $15 billion and $25 billion.

Amazon’s Mechanical Turk is one of the largest outsourcing platforms in the United States and has become particularly popular in the social science research community as a way to conduct inexpensive surveys and experiments. The platform has also become an emblem of the way that the internet enables new businesses and social structures to arise.

In light of its widespread use by the research community and overall prominence within the emerging world of online outsourcing, Pew Research Center conducted a detailed case study examining the Mechanical Turk platform in late 2015 and early 2016. The study uses three different research methodologies to examine various aspects of the Mechanical Turk ecosystem: human content analysis of the platform, a canvassing of Mechanical Turk workers and an analysis of third-party data.

The first goal of this research was to understand who uses the Mechanical Turk platform for research or business purposes, why they use it and who completes the work assignments posted there. To evaluate these issues, Pew Research Center performed a content analysis of the tasks posted on the site during the week of Dec. 7-11, 2015.

A second goal was to examine the demographics and experiences of the workers who complete the tasks appearing on the site. This is relevant not just to fellow researchers who might be interested in using the platform, but also as a snapshot of one set of “gig economy” workers. To address these questions, Pew Research Center administered a nonprobability online survey of Turkers from Feb. 9-25, 2016, by posting a task on Mechanical Turk that rewarded workers for answering questions about their demographics and work habits. The sample of 3,370 workers yields a number of findings, but it has its limits. This canvassing draws on an opt-in sample of those who were active on MTurk during this particular period, who saw our survey and who had the time and interest to respond. It does not represent all active Turkers in this period or, more broadly, all workers on MTurk.

Finally, this report uses data collected by the online tool mturk-tracker, which is run by Dr. Panagiotis G. Ipeirotis of the New York University Stern School of Business, to examine the amount of activity occurring on the site. The mturk-tracker data are publicly available online, though the insights presented here have not been published elsewhere.

Workers and their use of the site

Among the key findings of our February 2016 opt-in canvassing of Mechanical Turk workers:

- The U.S.-based workers who responded to our canvassing are younger and more educated than U.S. workers in general: 51% of these respondents have a college degree, compared with 36% of working U.S. adults over the age of 18. And 88% of these Turkers are under 50 years old, compared with 66% of employed adults.

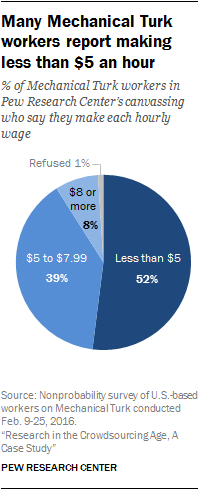

- These Turkers generally report earning less than minimum wage.2 While the federal minimum wage is $7.25 per hour (and is even higher in some jurisdictions), about half of workers (52%) in our sample who were asked about their incomes report earning a rate of less than $5 an hour. Making $4.99 per hour, a worker who performs tasks for 40 hours a week and does not take a vacation for an entire year would earn $10,379.20 (before taxes). Only 8% say they earn $8 per hour or more.3

- Turkers in this sample say they frequently visit the site to complete work, rather than checking in on sporadic occasions. Almost two-thirds of these workers (63%) say they perform tasks on the site “every day.”

- Most of these Turkers in the Pew Research Center sample use the site to supplement other income sources, though a sizable minority relies on the site for the majority of their incomes. More than half (53%) say that “very little” of their incomes come from Mechanical Turk. By contrast, Turkers who earn “all” or “most” of their incomes there make up 25% of workers.

- Older workers in this canvassing tend to earn lower wages, and fewer of them rely exclusively on the site for income. Of the Turkers asked about their incomes, almost three-quarters of those ages 50 or older make less than $5 per hour. By comparison, about half of Turkers under 50 in the sample fall into this category.

- The demographics of Turkers in this study who earn “all” or “most” of their incomes on the site differ from those of Turkers who use the site to earn a portion of their incomes. Those who use Mechanical Turk for “all” or “most” of their incomes tend to be younger and less educated, and to live in households with lower incomes, compared with those who use the site to supplement other pay. Some 49% of Turkers who get the majority of their incomes from MTurk are 18 to 29 years old, compared with 38% of those with other significant income sources. Around one-third (32%) of those who use the site as their primary income source have college degrees, compared with 58% of those who use it to supplement other incomes. And 61% live in households that earned less than $40,000 in 2015, compared with 37% of those who use the site to supplement other income sources.
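The annual earnings figure cited in the wage finding above ($4.99 per hour, 40 hours a week, no vacation) can be reproduced with a short calculation. This is a minimal sketch: the function name `annual_earnings` is hypothetical, and "an entire year" is assumed to mean 52 weeks. The second call applies the same assumptions to the $7.25 federal minimum wage purely for comparison; that result is computed here and does not appear in the report.

```python
from decimal import Decimal


def annual_earnings(hourly_rate, hours_per_week=40, weeks_per_year=52):
    """Gross (pre-tax) yearly earnings at a fixed hourly rate.

    Assumes 52 working weeks per year, i.e. no vacation.
    The rate is passed as a string so Decimal keeps exact cents.
    """
    return Decimal(hourly_rate) * hours_per_week * weeks_per_year


print(annual_earnings("4.99"))  # 10379.20
print(annual_earnings("7.25"))  # 15080.00
```

Decimal arithmetic is used instead of floats so the result matches the dollars-and-cents figure exactly.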

The requesters and tasks they post on the site

Our analysis of the tasks posted on Mechanical Turk during the week of Dec. 7-11, 2015, finds that:

- Mechanical Turk was used by a mix of companies and academics. During the week of our analysis of content on the site, academics were responsible for slightly more of the HIT groups posted (36%) than businesses (31%). The remaining tasks were sought by requesters who could not be identified.

- The majority of tasks on the site during this period (61%) were short, repetitive “microtasks” that paid 10 cents or less and could be completed in a few minutes. Employers used Turkers to perform tasks that are easy for humans and difficult for computers. In the week of our analysis, the most common type of request (37% of the HIT groups) involved having workers identify information seen in pictures – often sales receipts. These are easy assignments for humans, but still challenging for computers to read and decipher. The second most common type of task (26%) involved transcription of audio or video files. The classification of images or other information was third at 13% of the total. These tasks included requests for Turkers to determine if a picture contained a particular person or object, or if an audio recording met standards for quality. Surveys made up a fourth group, also at 13% of the total. Many of these tasks asked Turkers for information about themselves and their opinions – similar to the Pew Research Center survey discussed above.

- During the week studied, several companies used the site frequently and made up a large portion of the overall activity. In fact, the top five most active requesters, all businesses, accounted for more than half of all the HIT group postings on the site (53%). Those businesses posted similar HIT groups multiple times throughout the week that were made up of simple, repetitive tasks. The single most active business accounted for 19% of the total HIT groups – almost all of which asked Turkers to record information contained in pictures of sales receipts.

- While more HIT requests came from academics than businesses, these academics were less likely to post more than once during the week reviewed. And nearly all of their requests (89%) consisted of surveys. During the week studied, 107 different academic groups used the site for research. Of those, 70% posted only a single request during that time. By contrast, of the 91 businesses that posted jobs, 58% did so more than once.

The emergence of online outsourcing sheds light on other dimensions of new tech-enabled, world-spanning labor pools. As is often the case when strangers try to work together, issues of trust and reliability quickly enter the picture. On Mechanical Turk, workers have devised rating systems for evaluating requesters and formed active online communities to share stories, tips and warnings about performing in these new environments. Many requesters have contributed to a growing body of literature with suggestions for how to ensure quality work from large numbers of workers.

The economic factors at play in the Mechanical Turk community are similar to those in other markets that use the “gig economy” model. On the one hand, workers’ wages must be low enough that employers can save money using the sites. On the other hand, wages must be high enough that workers will want to participate. And issues of data quality and reliability will be paramount for researchers using the sites.

This report will cover these issues and explain how the Mechanical Turk marketplace represents emerging labor markets unrestrained by geographic boundaries and physical limitations.