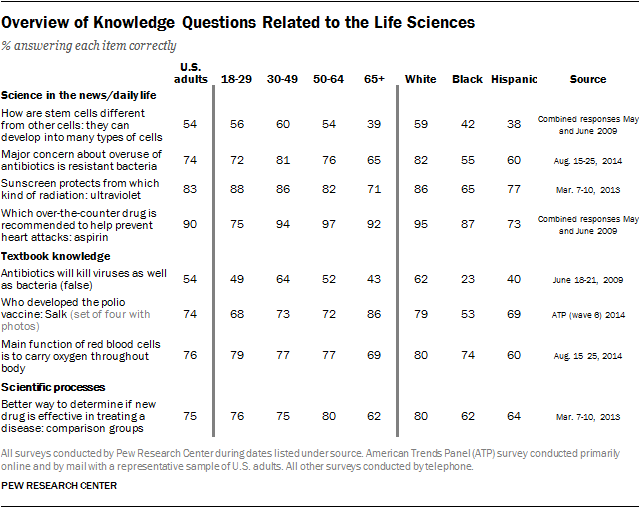

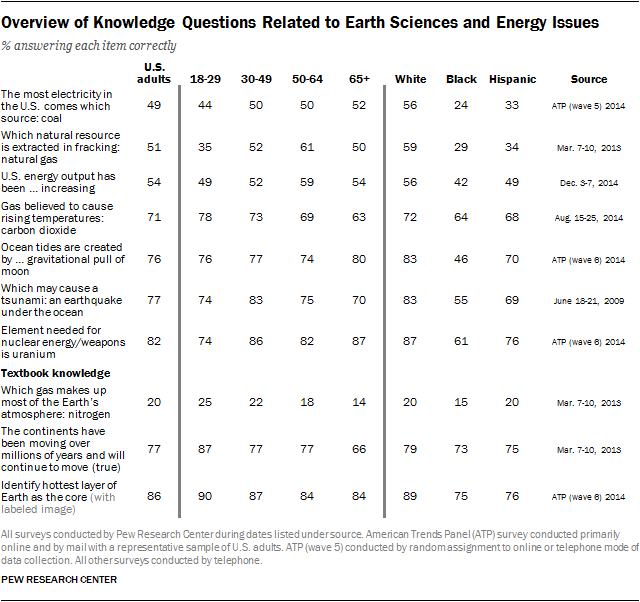

The set of 12 questions from the new Pew Research Center survey is, of course, only a small fraction of the possible topics and approaches to measuring public knowledge and understanding about science. In this section, we show the 12 questions from the new survey alongside other science knowledge questions asked across a handful of past Pew Research surveys conducted since 2009. This overview of findings underscores the caution that how much the public appears to know or not know about science depends on the nature of the questions asked. Some information is widely held, other information much less so. It also shows that the broad patterns described above of differences in science knowledge by educational level and across demographic groups tend to be consistent with the patterns found in earlier surveys that used different science knowledge questions and different survey methods.

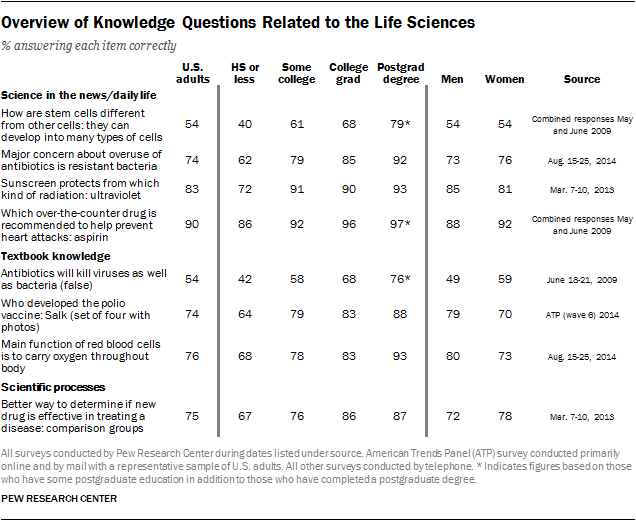

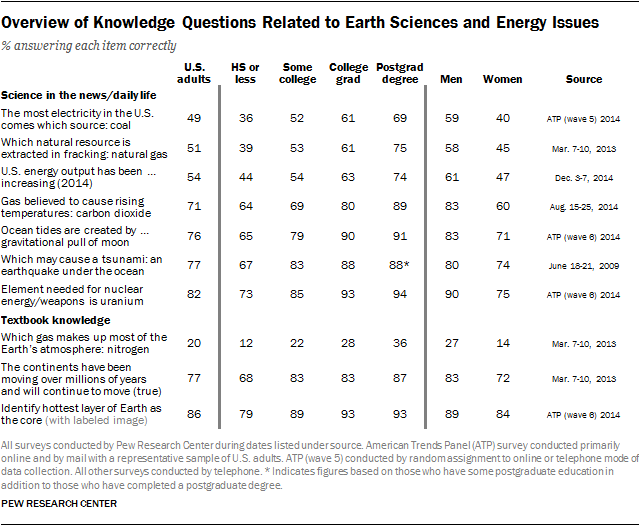

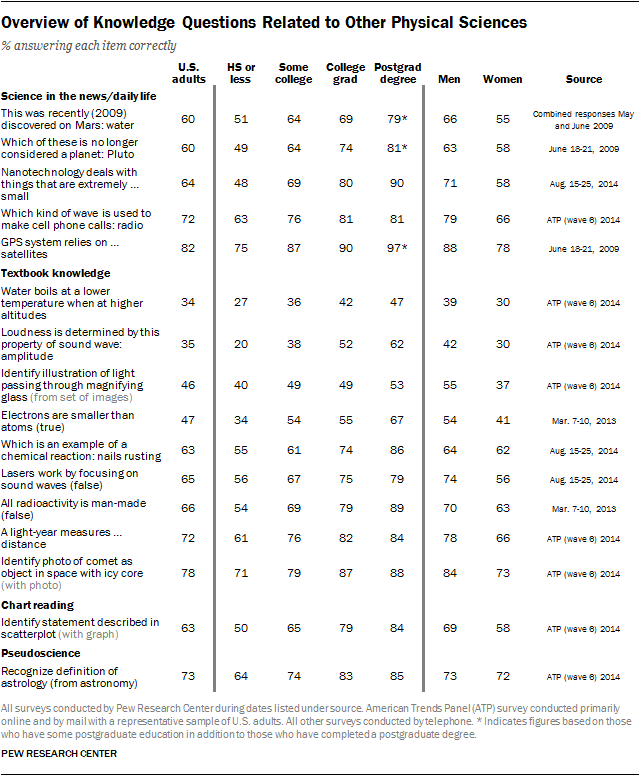

People who happen to know more, on average, about the particular questions on a given survey are expected to know more about other topics within the broad domain of science. But there may be differences depending on the fields of science covered, as well as the extent to which the questions tap information that may be learned from developments in the news or applications of scientific principles in everyday life. Thus, the tables here show the questions grouped into broad topical categories related to the life sciences, earth sciences and energy issues, as well as other physical sciences. The questions are also grouped into broad types, such as those that might come from news coverage of science and technology issues or applications of science in everyday life and those that might be found in science education textbooks.

Knowledge of science facts, especially those typically covered in science textbooks, tends to be stable over time. For example, the average number of items correct on a nine-item scale of factual knowledge of science collected on behalf of the National Center for Science and Engineering Statistics has stayed about the same over the past two decades.20 Topics connected with emerging science and science-related issues covered in the news may be more likely to change over time.

The new Pew Research survey findings were collected primarily online with a nationally representative sample of adults (in the Center’s American Trends Panel), so the large majority of respondents completed the survey using a computer or smartphone.21 Most of the past Pew Research surveys with science knowledge questions were conducted by telephone, however. Little is known about how different modes of data collection might influence estimates of science knowledge. Prior to fielding the new science knowledge survey online, we conducted one mode experiment in which half the respondents from the American Trends Panel were randomly assigned to answer an item about the most common source of electricity in the U.S. via a phone survey, and the other half were presented the question online. There were no significant differences in knowledge across the two modes. For more on mode differences, see the Pew Research report “From Telephone to the Web: The Challenge of Mode of Interview Effects in Public Opinion Polls.”
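As a rough illustration of how a mode comparison like this one might be checked, the sketch below runs a two-proportion z-test on the share of correct answers in each mode. It is not the Center's analysis: the function name and the counts are hypothetical, and the test ignores survey weights and design effects, which a real analysis of panel data would need to account for.

```python
import math


def two_proportion_z_test(correct_a, n_a, correct_b, n_b):
    """Two-sided z-test for a difference between two independent proportions.

    Assumes simple random samples; survey weights are NOT applied here.
    """
    p_a = correct_a / n_a
    p_b = correct_b / n_b
    # Pooled proportion under the null hypothesis of no mode difference.
    pooled = (correct_a + correct_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_a - p_b) / se
    # Two-sided p-value from the standard normal distribution.
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


# Hypothetical counts for illustration only (not from the survey):
# 210 of 500 phone respondents and 200 of 500 web respondents answer correctly.
z, p_value = two_proportion_z_test(210, 500, 200, 500)
print(f"z = {z:.2f}, p = {p_value:.2f}")
```

With counts like these, the gap between modes would fall well within what random assignment alone could produce, which is what "no significant differences" means in this context.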

Readers should also keep in mind that the estimates of public knowledge about science may vary depending on the extent to which respondents are encouraged to guess. Respondents in these surveys were instructed to provide their best guess, even if they were not certain of the correct answer, and while they were able to skip questions, there was no explicit option to say “don’t know.” One recent analysis of science knowledge questions collected on the General Social Survey suggests that instructions that discourage guessing (e.g., by making it easier to record a “don’t know” response) may provide a more valid measure of science understanding because some of those who guess will provide the correct response by chance without holding a clear understanding of the scientific principles.22
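To see why guessing can inflate the share of correct answers, consider a simplified sketch. It rests on assumptions that do not come from the surveys or from the analysis cited above: each question has $k$ answer options, a share $q$ of respondents truly know the answer, and everyone else guesses uniformly at random. Under those assumptions the observed share correct, $p_{\text{obs}}$, and the implied share who know the answer are:

```latex
p_{\text{obs}} = q + \frac{1 - q}{k}
\qquad\Longrightarrow\qquad
q = \frac{k\,p_{\text{obs}} - 1}{k - 1}
```

For a four-option question ($k = 4$), an observed 70% correct would correspond to 60% who know the answer under this model. Respondents with partial knowledge do not guess uniformly, so this is best read as an illustration of the mechanism rather than a correction to apply to the estimates.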

However, some studies of adult knowledge in the political domain have argued that surveys that discourage guessing by offering an explicit “don’t know” option underestimate knowledge because some respondents have meaningful, though partial, information but are less inclined to guess in those situations.23 One recent study of political knowledge argues that visual cues tap into different stores of political knowledge than do questions based only on verbal cues, and that educated respondents may have more of an advantage on verbal than visual knowledge questions.24

These issues go beyond what we can disentangle here. Despite the limitations of any survey, Pew Research Center’s science knowledge findings provide a nationally representative snapshot of what the public knows about new and older scientific developments, textbook principles covered in K-12 education, and topics discussed in the news. The overall findings and the divides among key demographic groups provide a fresh look at the American public’s science knowledge and may provide new insights for the larger civic effort on science education among both children and adults.