About the Pew Internet & American Life Project

The Pew Internet Project is a nonprofit, non-partisan initiative of the Pew Research Center that explores the impact of the Internet on children, families, communities, the workplace, schools, health care, and civic/political life. Support for the project is provided by The Pew Charitable Trusts. More information is available at: https://www.pewresearch.org/internet

Question Language and Methodology

February 2007 Tracking Survey

Final Topline, 3/12/07

Data for February 15 – March 7, 2007

Princeton Survey Research Associates International for the Pew Internet & American Life Project

Sample: n = 2,200 adults 18 and older

Interviewing dates: 02.15.07 – 03.07.07

- Margin of error is plus or minus 2 percentage points for results based on total sample [n=2,200]

- Margin of error is plus or minus 3 percentage points for results based on internet users [n=1,492]

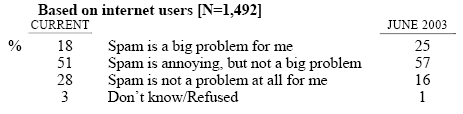

SP1 Which of the following best describes how spam affects your life on the Internet?

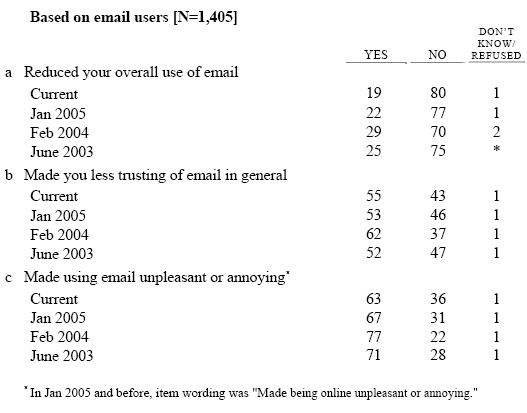

SP2 We’d like to know if unsolicited email, or spam, has affected you in any of the following ways. Has spam…?

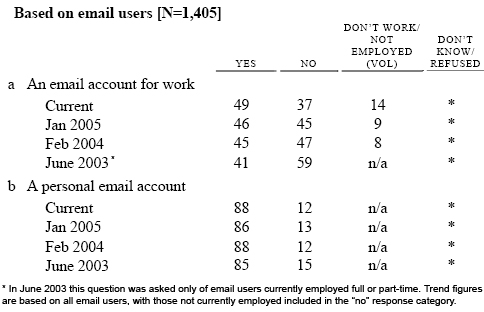

SP4 Thinking about your email… Do you have…

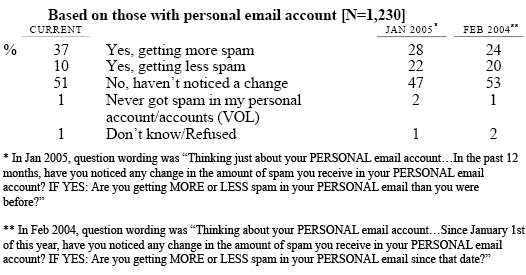

SP5 Thinking just about your PERSONAL email account… In the past 12 months, have you noticed any change in the amount of spam you receive in the INBOX of your PERSONAL email account? IF YES: Are you getting MORE or LESS spam in the INBOX of your PERSONAL email than you were before?

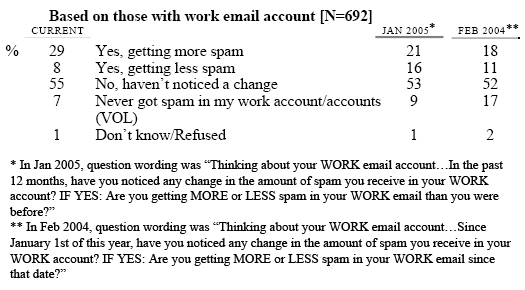

SP6 Thinking just about your WORK email account… In the past 12 months, have you noticed any change in the amount of spam you receive in the INBOX of your WORK account? IF YES: Are you getting MORE or LESS spam in the INBOX of your WORK email than you were before?

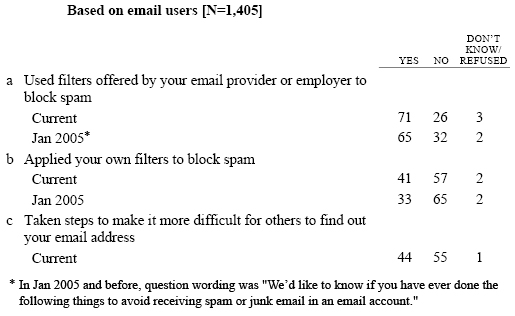

SP18 We’d like to know if you have ever done the following things to keep spam out of your inbox. Have you ever…?

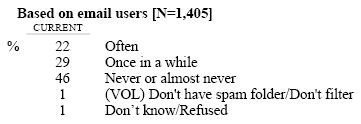

SP19 How often do you check your spam folder for email messages that might have been sent there by mistake? Do you do this…

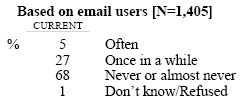

SP20 How often do you unintentionally open an email message without realizing it was spam? Do you do this…

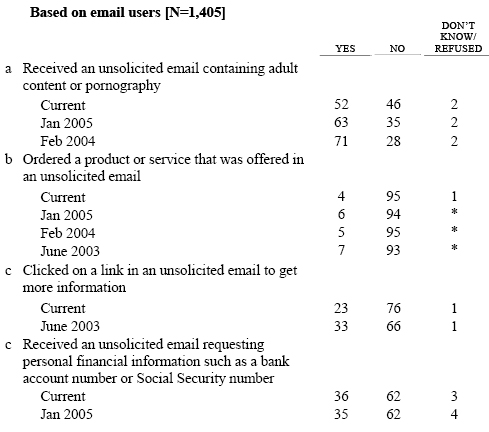

SP3 Thinking about all of the times you’ve received unsolicited email, have you ever…?

Methodology

This report is based on the findings of a daily tracking survey on Americans’ use of the Internet. The results in this report are based on data from telephone interviews conducted by Princeton Survey Research Associates from February 15 to March 7, 2007, among a sample of 2,200 adults, 18 and older. For results based on the total sample, one can say with 95% confidence that the error attributable to sampling and other random effects is plus or minus 2.3 percentage points. For results based on Internet users (n=1,492), the margin of sampling error is plus or minus 2.8 percentage points. In addition to sampling error, question wording and practical difficulties in conducting telephone surveys may introduce some error or bias into the findings of opinion polls.
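The published margins are somewhat wider than the textbook simple-random-sampling formula gives. The minimal Python sketch below assumes the worst case p = 0.5 and z = 1.96, computes the simple-random-sampling margin for each base, and reports the design effect the published figures would imply. The document does not say how PSRAI derived 2.3 and 2.8 points, so reading the gap as a design effect from weighting is an assumption, and the function names are illustrative.

```python
import math


def srs_margin_of_error(n: int, p: float = 0.5, z: float = 1.96) -> float:
    """Half-width of a 95% confidence interval for a proportion, in percentage
    points, assuming simple random sampling (no design effect)."""
    return 100 * z * math.sqrt(p * (1 - p) / n)


def design_effect(published_moe: float, srs_moe: float) -> float:
    """Design effect implied by a published margin: (published / SRS margin) squared.
    Assumption: the published margin is the SRS margin inflated by sqrt(deff)."""
    return (published_moe / srs_moe) ** 2


# Sample sizes and published margins are taken from the methodology text.
for label, n, published in [("total sample", 2200, 2.3), ("internet users", 1492, 2.8)]:
    srs = srs_margin_of_error(n)
    deff = design_effect(published, srs)
    print(f"{label}: SRS ±{srs:.1f} pts, published ±{published} pts, implied deff ≈ {deff:.2f}")
```

For n = 2,200 the formula gives roughly ±2.1 points and for n = 1,492 roughly ±2.5 points; both published figures correspond to an implied design effect of about 1.2.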

The sample for this survey is a random digit sample of telephone numbers selected from telephone exchanges in the continental United States. The random digit aspect of the sample is used to avoid “listing” bias and provides representation of both listed and unlisted numbers (including not-yet-listed numbers). The design of the sample achieves this representation by random generation of the last two digits of telephone numbers selected on the basis of their area code, telephone exchange, and bank number.
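The number-generation step can be sketched in Python as follows. This assumes a "bank" is the block of 100 numbers fixed by the area code, the exchange, and the next two digits, so only the last two digits are drawn at random. How PSRAI chooses which banks to sample is not described here; the banks in the usage lines are hypothetical, and `rdd_number` is an illustrative name.

```python
import random


def rdd_number(area_code: str, exchange: str, bank: str, rng: random.Random) -> str:
    """Complete a 100-number bank (area code + exchange + 2-digit bank)
    by appending two randomly generated digits."""
    if len(area_code) != 3 or len(exchange) != 3 or len(bank) != 2:
        raise ValueError("expected 3-digit area code, 3-digit exchange, 2-digit bank")
    last_two = f"{rng.randrange(100):02d}"  # 00..99, each equally likely
    return f"({area_code}) {exchange}-{bank}{last_two}"


# Hypothetical banks; a real design would draw these from exchange databases.
rng = random.Random(2007)  # fixed seed so the sketch is reproducible
hypothetical_banks = [("201", "555", "12"), ("609", "555", "48")]
sample = [rdd_number(*rng.choice(hypothetical_banks), rng=rng) for _ in range(5)]
print(sample)
```

Because the final digits are generated rather than copied from a directory, unlisted and not-yet-listed numbers in a bank are as likely to be drawn as listed ones, which is the point the paragraph makes about avoiding listing bias.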

New sample was released daily and was kept in the field for at least five days. The sample was released in replicates, which are representative subsamples of the larger population. This ensures that complete call procedures were followed for the entire sample. At least 10 attempts were made to complete an interview at sampled households. The calls were staggered over times of day and days of the week to maximize the chances of making contact with a potential respondent. Each household received at least one daytime call in an attempt to find someone at home. In each contacted household, interviewers asked to speak with the youngest male currently at home. If no male was available, interviewers asked to speak with the youngest female at home. This systematic respondent selection technique has been shown to produce samples that closely mirror the population in terms of age and gender. All interviews completed on any given day were considered to be the final sample for that day.
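The respondent-selection rule (youngest male at home, otherwise youngest female) can be written as a small Python function. This is an illustrative sketch: the `Adult` record and `select_respondent` name are hypothetical, and restricting candidates to people 18 and older is an assumption taken from the sample definition rather than something the text states about the selection step itself.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Adult:
    name: str
    age: int
    sex: str  # "M" or "F"


def select_respondent(people_at_home: List[Adult]) -> Optional[Adult]:
    """Return the youngest male at home; if no male is available, the youngest
    female; None if nobody eligible is at home."""
    for sex in ("M", "F"):
        # Assumption: only adults (18+) are eligible, matching the sample definition.
        candidates = [p for p in people_at_home if p.sex == sex and p.age >= 18]
        if candidates:
            return min(candidates, key=lambda p: p.age)
    return None


household = [Adult("A", 45, "F"), Adult("B", 52, "M"), Adult("C", 23, "M")]
print(select_respondent(household))
```

Asking first for the youngest male offsets the tendency of older people and women to be the ones who answer the phone, which is why the text says the rule yields samples that mirror the population's age and gender mix.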

Non-response in telephone interviews produces some known biases in survey-derived estimates because participation tends to vary for different subgroups of the population, and these subgroups are likely to vary also on questions of substantive interest. In order to compensate for these known biases, the sample data are weighted in analysis. The demographic weighting parameters are derived from a special analysis of the Census Bureau’s most recently available Annual Social and Economic Supplement (March 2006). This analysis produces population parameters for the demographic characteristics of adults age 18 or older, living in households that contain a telephone. These parameters are then compared with the sample characteristics to construct sample weights. The weights are derived using an iterative technique that simultaneously balances the distribution of all weighting parameters.
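The text does not name the iterative technique. Raking (iterative proportional fitting) is the standard method that matches the description, so the Python sketch below uses it as an assumption: it repeatedly rescales weights so each variable's weighted distribution matches its target shares until all margins agree at once. The respondents and target shares are hypothetical, not the Census figures, and a production procedure may add steps (such as weight trimming) not shown here.

```python
from typing import Dict, List


def rake(rows: List[Dict[str, str]], targets: Dict[str, Dict[str, float]],
         max_iter: int = 100, tol: float = 1e-8) -> List[float]:
    """Iterative proportional fitting: adjust per-respondent weights until each
    variable's weighted category shares match the target shares.
    Every category must appear in the sample (otherwise division by zero)."""
    weights = [1.0] * len(rows)
    for _ in range(max_iter):
        max_change = 0.0
        for var, shares in targets.items():
            total = sum(weights)
            # Current weighted total in each category of this variable.
            current = {cat: 0.0 for cat in shares}
            for w, row in zip(weights, rows):
                current[row[var]] += w
            # Scale each respondent so this variable's margin hits its target.
            for i, row in enumerate(rows):
                factor = shares[row[var]] * total / current[row[var]]
                max_change = max(max_change, abs(factor - 1.0))
                weights[i] *= factor
        if max_change < tol:  # all margins already matched on this pass
            break
    return weights


# Hypothetical respondents and hypothetical population shares.
sample = [
    {"sex": "M", "age": "18-49"},
    {"sex": "M", "age": "50+"},
    {"sex": "F", "age": "18-49"},
    {"sex": "F", "age": "50+"},
    {"sex": "F", "age": "50+"},
]
targets = {"sex": {"M": 0.48, "F": 0.52}, "age": {"18-49": 0.57, "50+": 0.43}}
print([round(w, 3) for w in rake(sample, targets)])
```

Raking only needs each variable's marginal distribution, not the full cross-tabulation, which is why it suits population parameters derived from a survey such as the Census supplement.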

PSRAI calculates a response rate as the product of three individual rates: the contact rate, the cooperation rate, and the completion rate. Of the residential numbers in the sample, 76 percent were contacted by an interviewer and 41 percent agreed to participate in the survey. Eighty-seven percent were found eligible for the interview. Furthermore, 94 percent of eligible respondents completed the interview. Therefore, the final response rate is 29 percent.
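The reported figure can be checked by multiplying the three named rates, as in the short Python sketch below. It reproduces only the arithmetic; PSRAI's exact definitions of each rate (for example, how numbers of unknown status are handled) are not given here, and `response_rate` is an illustrative name.

```python
def response_rate(contact: float, cooperation: float, completion: float) -> float:
    """Response rate as the product of the contact, cooperation, and completion rates."""
    return contact * cooperation * completion


# Rates reported in the text: 76% contacted, 41% cooperated, 94% of eligibles completed.
rate = response_rate(0.76, 0.41, 0.94)
print(f"{rate:.1%}")
```

The product is about 29.3 percent, matching the reported 29 percent. The 87 percent eligibility figure is not one of the three factors; multiplying it in as well would give about 25 percent.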