Summary

The September 2005 Tracking Survey (Online Dating Extension), sponsored by the Pew Internet and American Life Project, obtained telephone interviews with a nationally representative sample of 3,215 adults living in continental United States telephone households. The survey was conducted by Princeton Survey Research Associates International (PSRAI). The interviews were conducted in English by Princeton Data Source, LLC from September 14 to December 8, 2005. This report is based on the findings of a daily tracking survey of Americans’ use of the Internet. Statistical results are weighted to correct known demographic discrepancies. The margin of sampling error for the complete set of weighted data is plus or minus 1.9 percentage points. For results based on Internet users (n=2,252), the margin of sampling error is plus or minus 2.3 percentage points. For results based on online daters (n=204), the margin of sampling error is plus or minus 7.5 percentage points.

Details on the design, execution, and analysis of the survey are discussed below.

Design and Data Collection Procedures

Sample Design

The sample was designed to represent all continental U.S. telephone households. The telephone sample was provided by Survey Sampling International, LLC (SSI) according to PSRAI specifications. The sample was drawn using standard list-assisted random digit dialing (RDD) methodology. Active blocks of telephone numbers (area code + exchange + two-digit block number) that contained three or more residential directory listings were selected with probabilities in proportion to their share of listed telephone households; after selection, two more digits were added randomly to complete the number. This method guarantees coverage of every assigned phone number regardless of whether that number is directory listed, purposely unlisted, or too new to be listed. After selection, the numbers were compared against business directories and matching numbers were purged.
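The list-assisted RDD procedure described above can be sketched roughly as follows. This is only an illustration: the function names, the `blocks` mapping, and the example prefixes are hypothetical, and SSI's actual sampling software is not described in this report.

```python
import random

def draw_rdd_numbers(blocks, n_numbers, min_listings=3, seed=0):
    """Draw 10-digit numbers from active blocks, proportional to listings.

    `blocks` (hypothetical data) maps an 8-digit prefix
    (area code + exchange + two-digit block number) to its count of
    residential directory listings.
    """
    rng = random.Random(seed)
    # Keep only blocks with at least `min_listings` residential listings.
    eligible = {p: c for p, c in blocks.items() if c >= min_listings}
    prefixes = list(eligible)
    weights = [eligible[p] for p in prefixes]
    # Select blocks with probability proportional to their listed households.
    chosen = rng.choices(prefixes, weights=weights, k=n_numbers)
    # Append two random digits to complete each number, so unlisted and
    # newly assigned numbers within a block can also be reached.
    return [f"{p}{rng.randrange(100):02d}" for p in chosen]

def purge_business(numbers, business_numbers):
    """Drop numbers that match a business directory (hypothetical set)."""
    return [n for n in numbers if n not in business_numbers]

blocks = {"60955512": 40, "60955513": 2, "21255501": 10}
sample = draw_rdd_numbers(blocks, 50, seed=1)
```

In this sketch the block with only two listings is never selected, while every number inside an eligible block has the same chance of being generated whether or not it is listed.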

Contact Procedures

Interviews were conducted from September 14 to December 8, 2005. As many as 10 attempts were made to contact every sampled telephone number. Sample was released for interviewing in replicates, which are representative subsamples of the larger sample. Using replicates to control the release of sample ensures that complete call procedures are followed for the entire sample.
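One simple way to form replicates of the kind described above is to shuffle the sampled numbers and deal them into equal-sized groups, so that each group is itself a random subsample. The sketch below assumes that approach; the function name and the number of replicates are hypothetical, and the report does not say how PSRAI builds its replicates.

```python
import random

def make_replicates(sample, n_replicates, seed=0):
    """Split a sample into random subsamples that can be released in stages."""
    rng = random.Random(seed)
    shuffled = list(sample)
    rng.shuffle(shuffled)
    # Deal the shuffled numbers round-robin into `n_replicates` groups.
    return [shuffled[i::n_replicates] for i in range(n_replicates)]

numbers = [f"609555{i:04d}" for i in range(1000)]
replicates = make_replicates(numbers, n_replicates=10)
```

Releasing one replicate at a time means every released number can receive the full schedule of call attempts before the field period ends.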

Calls were staggered over times of day and days of the week to maximize the chance of making contact with potential respondents. Each household received at least one daytime call in an attempt to find someone at home. In each contacted household, interviewers asked to speak with the youngest adult male currently at home. If no male was available, interviewers asked to speak with the oldest female at home. This systematic respondent selection technique has been shown to produce samples that closely mirror the population in terms of age and gender.

Weighting and Analysis

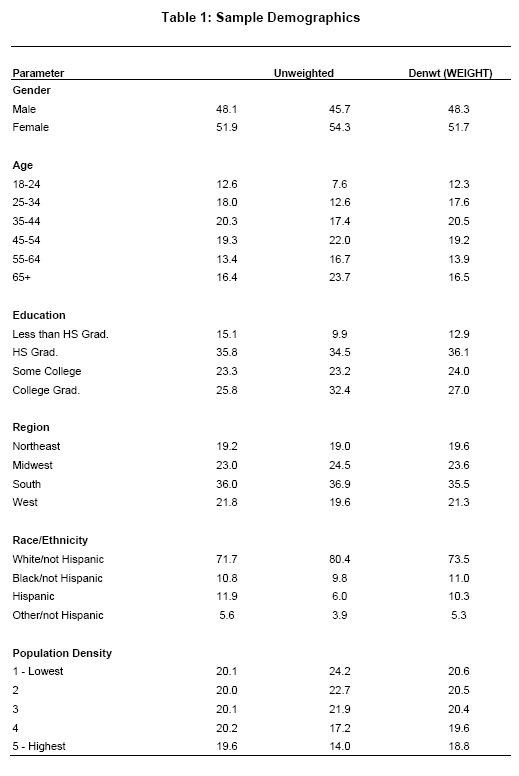

Weighting is generally used in survey analysis to compensate for patterns of nonresponse that might bias results.<sup>17</sup> The weight variable balances the interviewed sample of all adults to match national parameters for sex, age, education, race, Hispanic origin, region (U.S. Census definitions), and population density. The White, non-Hispanic subgroup was also balanced on age, education and region. These parameters came from a special analysis of the Census Bureau’s 2004 Annual Social and Economic Supplement (ASEC) that included all households in the continental United States that had a telephone.

Weighting was accomplished using Sample Balancing, a special iterative sample weighting program that simultaneously balances the distributions of all variables using a statistical technique called the Deming Algorithm. Weights were trimmed to prevent individual interviews from having too much influence on the final results. The use of these weights in statistical analysis ensures that the demographic characteristics of the sample closely approximate the demographic characteristics of the national population. Table 1 compares weighted and unweighted sample distributions to population parameters.
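The general idea behind iterative sample balancing (often called raking or iterative proportional fitting) can be illustrated with a minimal sketch. This is not the Sample Balancing program itself: the function names, the toy records, the target shares, and the trimming bounds are all hypothetical, chosen only to show the mechanics.

```python
def rake(records, targets, max_iter=100, tol=1e-8):
    """Adjust case weights until weighted margins match target shares.

    records: list of dicts, e.g. {"sex": "F", "age": "young"}
    targets: {variable: {category: population share}}
    """
    weights = [1.0] * len(records)
    for _ in range(max_iter):
        max_change = 0.0
        for var, shares in targets.items():
            total = sum(weights)
            # Current weighted total in each category of this variable.
            current = {}
            for rec, w in zip(records, weights):
                current[rec[var]] = current.get(rec[var], 0.0) + w
            # Scale each case so this variable's margin hits its target.
            for i, rec in enumerate(records):
                factor = shares[rec[var]] * total / current[rec[var]]
                max_change = max(max_change, abs(factor - 1.0))
                weights[i] *= factor
        if max_change < tol:
            break
    return weights

def trim_weights(weights, low=0.3, high=3.0):
    """Cap weights at assumed multiples of the mean weight."""
    mean = sum(weights) / len(weights)
    return [min(max(w, low * mean), high * mean) for w in weights]

def weighted_shares(records, weights, var):
    """Weighted share of each category of `var`."""
    total = sum(weights)
    shares = {}
    for rec, w in zip(records, weights):
        shares[rec[var]] = shares.get(rec[var], 0.0) + w / total
    return shares

records = ([{"sex": "F", "age": "young"}] * 3 + [{"sex": "F", "age": "old"}]
           + [{"sex": "M", "age": "young"}] + [{"sex": "M", "age": "old"}])
targets = {"sex": {"F": 0.5, "M": 0.5}, "age": {"young": 0.5, "old": 0.5}}
weights = rake(records, targets)
```

Each pass corrects one margin at a time, which slightly disturbs the others; repeating the passes brings all margins to their targets together. Trimming afterwards limits the influence of any single interview at the cost of a small departure from the targets.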

Effects of Sample Design on Statistical Inference

Post-data collection statistical adjustments require analysis procedures that reflect departures from simple random sampling. PSRAI calculates the effects of these design features so that an appropriate adjustment can be incorporated into tests of statistical significance when using these data. The so-called “design effect” or deff represents the loss in statistical efficiency that results from systematic non-response. The total sample design effect for this survey is 1.22.

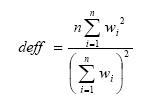

PSRAI calculates the composite design effect for a sample of size $n$, with each case having a weight, $w_i$, as:

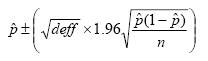
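The formula itself appears only as an image in this copy. A widely used approximation for the design effect due to unequal weights is $n \sum w_i^2 / (\sum w_i)^2$; the sketch below assumes that this is the intended formula, and the function name is hypothetical.

```python
def design_effect(weights):
    """Approximate design effect from unequal weights (assumed formula):
    deff = n * sum(w_i^2) / (sum(w_i))^2
    """
    n = len(weights)
    sum_w = sum(weights)
    sum_w_sq = sum(w * w for w in weights)
    return n * sum_w_sq / (sum_w ** 2)

equal = design_effect([2.0, 2.0, 2.0])         # all weights equal
unequal = design_effect([1.0, 1.0, 2.0, 2.0])  # weights vary
```

Under this formula, equal weights give a design effect of exactly 1, and the value rises as the weights become more variable.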

In a wide range of situations, the adjusted standard error of a statistic should be calculated by multiplying the usual formula by the square root of the design effect ($\sqrt{\mathit{deff}}$). Thus, the formula for computing the 95% confidence interval around a percentage is:

$$\hat{p} \pm \left( \sqrt{\mathit{deff}} \times 1.96 \sqrt{\frac{\hat{p}(1-\hat{p})}{n}} \right)$$

where $\hat{p}$ is the sample estimate and $n$ is the unweighted number of sample cases in the group being considered.

The survey’s margin of error is the largest 95% confidence interval for any estimated proportion based on the total sample: the one around 50%. For example, the margin of error for the entire sample is ±1.9 percentage points. This means that in 95 out of every 100 samples drawn using the same methodology, estimated proportions based on the entire sample will be no more than 1.9 percentage points away from their true values in the population. It is important to remember that sampling fluctuations are only one possible source of error in a survey estimate. Other sources, such as respondent selection bias, questionnaire wording and reporting inaccuracy, may contribute additional error of greater or lesser magnitude.
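As a rough check on the reported margins, the adjustment described above (the usual standard error multiplied by the square root of the design effect) can be computed directly. The function name and defaults are hypothetical, and the sketch assumes the total-sample design effect of 1.22 also applies to the Internet-user subgroup, which the report does not state.

```python
import math

def margin_of_error(n, deff=1.22, p=0.5, z=1.96):
    """95% margin of error in percentage points, adjusted for design effect."""
    standard_error = math.sqrt(p * (1 - p) / n)
    return 100 * math.sqrt(deff) * z * standard_error

total_sample = margin_of_error(3215)    # all adults
internet_users = margin_of_error(2252)  # Internet users
print(round(total_sample, 1), round(internet_users, 1))  # prints: 1.9 2.3
```

Setting `p=0.5` gives the widest interval, which is why the margin of error is quoted for an estimate of 50%.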

Response Rate

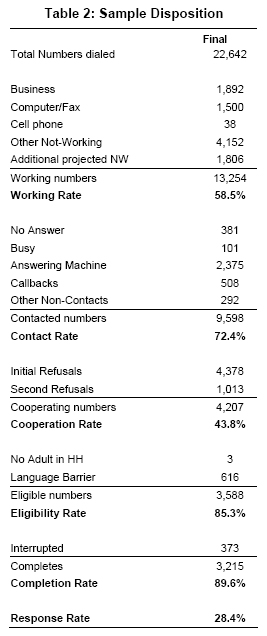

Following is the full disposition of all sampled telephone numbers:

Table 2 reports the disposition of all sampled telephone numbers ever dialed from the original telephone number sample. The response rate estimates the fraction of all eligible respondents in the sample that were ultimately interviewed. At PSRAI it is calculated by taking the product of three component rates:<sup>18</sup>

- Contact rate – the proportion of working numbers where a request for interview was made – of 72 percent<sup>19</sup>

- Cooperation rate – the proportion of contacted numbers where a consent for interview was at least initially obtained, versus those refused – of 44 percent

- Completion rate – the proportion of initially cooperating and eligible interviews that were completed – of 90 percent

Thus the response rate for this survey was 28 percent.
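The arithmetic behind this figure can be sketched as a simple product of the three component rates. The function name is hypothetical. Note that multiplying the rounded components gives about 28.5 percent; the reported 28 percent presumably reflects component rates before rounding, which is an assumption since the unrounded values are not given here.

```python
def response_rate(contact, cooperation, completion):
    """Response rate as the product of the three component rates."""
    return contact * cooperation * completion

# Rounded component rates as reported in the list above.
rate = response_rate(contact=0.72, cooperation=0.44, completion=0.90)
print(f"{rate:.3f}")  # prints: 0.285
```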